Dual Coding Theory: Why Your Brain Learns Better With Words and Images Together

Allan Paivio's dual coding theory explains why engaging both visual and verbal channels leads to stronger learning. Here's what the science says.

In 1971, a Canadian psychologist named Allan Paivio published a book that would quietly reshape how we think about learning. The core idea was deceptively simple: the human brain doesn't process information through a single channel. It runs two.

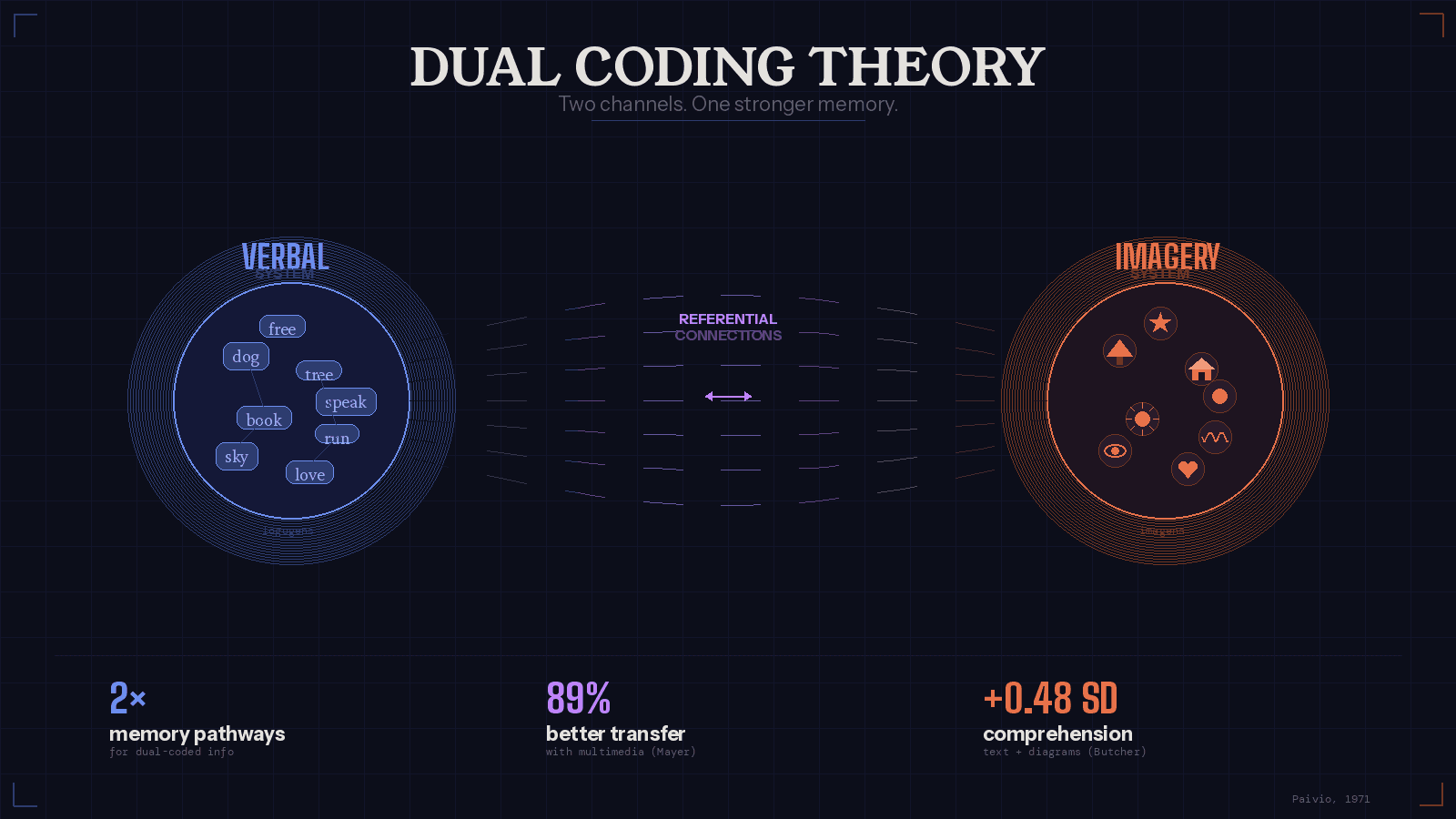

One channel handles language: words, sentences, speech. The other handles imagery: pictures, spatial relationships, sensory experiences. Paivio called this framework dual coding theory, and decades of research have since supported its central prediction: material encoded through both channels tends to be remembered better than material encoded through only one.

The Two Systems

Dual coding theory rests on the premise that our minds operate two functionally independent but interconnected cognitive subsystems. The verbal system processes language — spoken and written words, sentences, narratives. The nonverbal system (which Paivio often called the imagery system) processes sensory experience — what things look like, how they're arranged in space, how they sound, feel, and move.

Each system has its own units of representation. Paivio called the verbal units “logogens,” a term he borrowed from John Morton's model of word recognition, and coined “imagens” for the nonverbal ones. When you read the word “dog,” your verbal system activates a logogen. When you picture a dog (its shape, the sound of its bark, the feel of its fur), your imagery system activates an imagen. Critically, these two systems can operate independently, but they can also form what Paivio called “referential connections” between them. The word “dog” can trigger the image. The image can trigger the word. This cross-referencing is where dual coding gets its power.

Why Two Codes Beat One

The practical upshot is straightforward: information encoded in both systems is more likely to be remembered and retrieved than information encoded in just one. Paivio demonstrated this through a series of experiments comparing memory for concrete words (like “apple” or “table”) versus abstract words (like “justice” or “freedom”). Concrete words, which naturally evoke mental images, were consistently remembered better than abstract ones, which tend to be processed verbally only. The explanation is elegant — concrete words get dual coded, giving the brain two independent pathways back to the same memory.
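The "two independent pathways" idea can be made concrete with a toy probability model. This is a minimal sketch, not Paivio's own formalism: it assumes each code gives a separate, independent chance of retrieving the memory, and the 0.4 figures are made-up illustrative values rather than measured recall rates.

```python
def recall_probability(p_verbal: float, p_image: float = 0.0) -> float:
    """Chance that at least one of two independent routes retrieves the memory.

    p_verbal and p_image are hypothetical per-route retrieval probabilities.
    """
    for p in (p_verbal, p_image):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must be between 0 and 1")
    # Recall fails only if every route fails, so multiply the failure chances.
    return 1.0 - (1.0 - p_verbal) * (1.0 - p_image)


# An abstract word with a verbal route only (illustrative value).
abstract_word = recall_probability(0.4)

# A concrete word with a verbal route plus an equally strong image route.
concrete_word = recall_probability(0.4, 0.4)

print(f"verbal code only: {abstract_word:.2f}")  # 0.40
print(f"verbal + image:   {concrete_word:.2f}")  # 0.64
```

Under these assumptions the second route lifts recall from 0.40 to 0.64 without either route getting any stronger, which is the intuition behind the concreteness advantage described above.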

This finding extends beyond single words. When participants were shown rapid sequences of pictures and rapid sequences of words, pictures were recalled at significantly higher rates. The reason isn't that images are inherently “better” than words. It's that pictures are almost always named internally (activating the verbal system), which gives them automatic dual coding. Words, especially abstract ones, don't always generate images.

The effect also compounds. John Sweller, who developed the related Cognitive Load Theory, has argued that working memory capacity can be effectively increased, and learning improved, by using a dual-mode presentation. On this view the two channels don't compete for the same cognitive resources; they supplement each other. When the verbal and visual material are well matched, engaging both systems adds little cognitive load. It's what one researcher described as “double-barrelled learning”: a second route to encoding and retrieval at little extra cost.

How It Connects to Baddeley's Working Memory

Paivio's framework maps neatly onto another influential model of cognition: the working memory model that Alan Baddeley developed with Graham Hitch. The model includes a visuospatial sketchpad (for processing visual and spatial information) and a phonological loop (for processing verbal and acoustic information), both coordinated by a central executive. The structural parallel is hard to miss: the model's two storage components roughly mirror the two channels that Paivio described. Together, these models reinforce the idea that our cognitive architecture is fundamentally built for multimodal processing.

What the Research Shows

The evidence supporting dual coding is substantial and spans multiple domains. A meta-analysis by Butcher (2006) found that combining text with relevant diagrams improved comprehension by about half a standard deviation compared to text alone. Mayer (2009) demonstrated that multimedia instruction designed around dual coding principles improved transfer test performance by 89% over text-only conditions. These aren't small effects — they represent meaningful gains in how well people understand and apply new information.
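To get a feel for what "half a standard deviation" means, the effect size can be translated into a percentile. The sketch below is only an illustration: it assumes test scores in both groups are roughly normally distributed with the same spread, and the function name is hypothetical rather than taken from any of the cited studies.

```python
from statistics import NormalDist


def percentile_of_average_learner(effect_size_d: float) -> float:
    """Percentile, within the comparison group, of the treated group's average learner.

    Assumes normally distributed scores with equal spread in both groups.
    """
    # The standard normal CDF turns a shift in standard deviations into a rank.
    return 100.0 * NormalDist().cdf(effect_size_d)


# Half a standard deviation, the effect size quoted above.
percentile = percentile_of_average_learner(0.5)
print(f"percentile in the text-only group: {percentile:.0f}")  # 69
```

Read this way, an average learner given text plus diagrams would land at about the 69th percentile of the text-only group, rather than the 50th.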

The implications have been tested in classrooms as well. Clark and Paivio (1991) presented dual coding theory as a general framework for educational psychology, showing that concreteness, imagery, and verbal associative processes play major roles across educational domains — from knowledge comprehension and school learning to effective instruction and even motor skills development. Research in both traditional and remedial settings has consistently supported the idea that making abstract information concrete (through images, diagrams, or spatial organization) while also verbalizing concrete information (through labels, descriptions, or narration) leads to better outcomes.

Applications Beyond the Classroom

Dual coding theory wasn't built just for schools. Its implications reach into literacy, visual mnemonics, interface design, bilingual education, and human factors engineering. Paivio himself applied the framework to explain bilingual processing — how people who speak two languages form referential connections between words in different languages and shared imagery.

The theory also underpins much of modern multimedia design. The reason well-designed presentations pair images with narration (rather than images with on-screen text) traces back to dual coding: spoken words engage the verbal system through the ears while visuals engage the imagery system through the eyes, allowing both channels to work simultaneously without interference. When the words appear as on-screen text instead, text and images both have to enter through the eyes, so they compete for visual attention rather than complementing each other.

More recently, dual coding principles have informed the design of text-to-speech and immersion reading tools. When someone reads text on a screen while simultaneously listening to audio narration of the same words, the same language arrives through two senses at once. The text enters through the visual route, the audio enters through the auditory route, and the synchronized experience is meant to create a richer, more durable encoding of the material. It's Paivio's theory applied to a modern reading experience. Apps like Hoot Reader are built on this principle: by using OCR and AI-generated speech to turn physical book pages into synchronized text-and-audio experiences, Hoot gives readers a way to engage both eyes and ears with any book they already own, no separate audiobook purchase required.

Limitations and Criticisms

No theory is without its boundaries. The most common criticism of dual coding theory is that it accounts only for verbal and imagistic codes, leaving open the question of whether other representational formats exist. Emotional associations, motor memories, and abstract relational structures may not fit neatly into either channel. Some researchers have argued for propositional models of cognition that use a single, abstract format to represent all types of knowledge — though the weight of experimental evidence has generally favored Paivio's multimodal account.

There's also the question of individual differences. Not everyone benefits equally from visual or verbal strategies, and the effectiveness of dual coding can depend on the nature of the material, the learner's prior knowledge, and how well the two channels are aligned. Poorly matched images and text can actually increase cognitive load rather than reduce it — a finding that underscores the importance of thoughtful design.

Why It Still Matters

More than fifty years after Paivio first articulated dual coding theory, it remains one of the most practically useful frameworks in cognitive science. It explains why diagrams help, why concrete examples stick, why multimedia works, and why reading along with audio narration feels like a different (and often better) experience than reading or listening alone. It's a theory with an unusual gift: it tells you something true about how your brain works, and it also tells you exactly what to do about it.

The prescription is simple. When you want to learn something, don't just read about it — see it. When you want to remember something, don't just picture it — say it. And when you want to truly immerse yourself in a book, don't choose between your eyes and your ears. Use both.